The Forest View — TL;DR

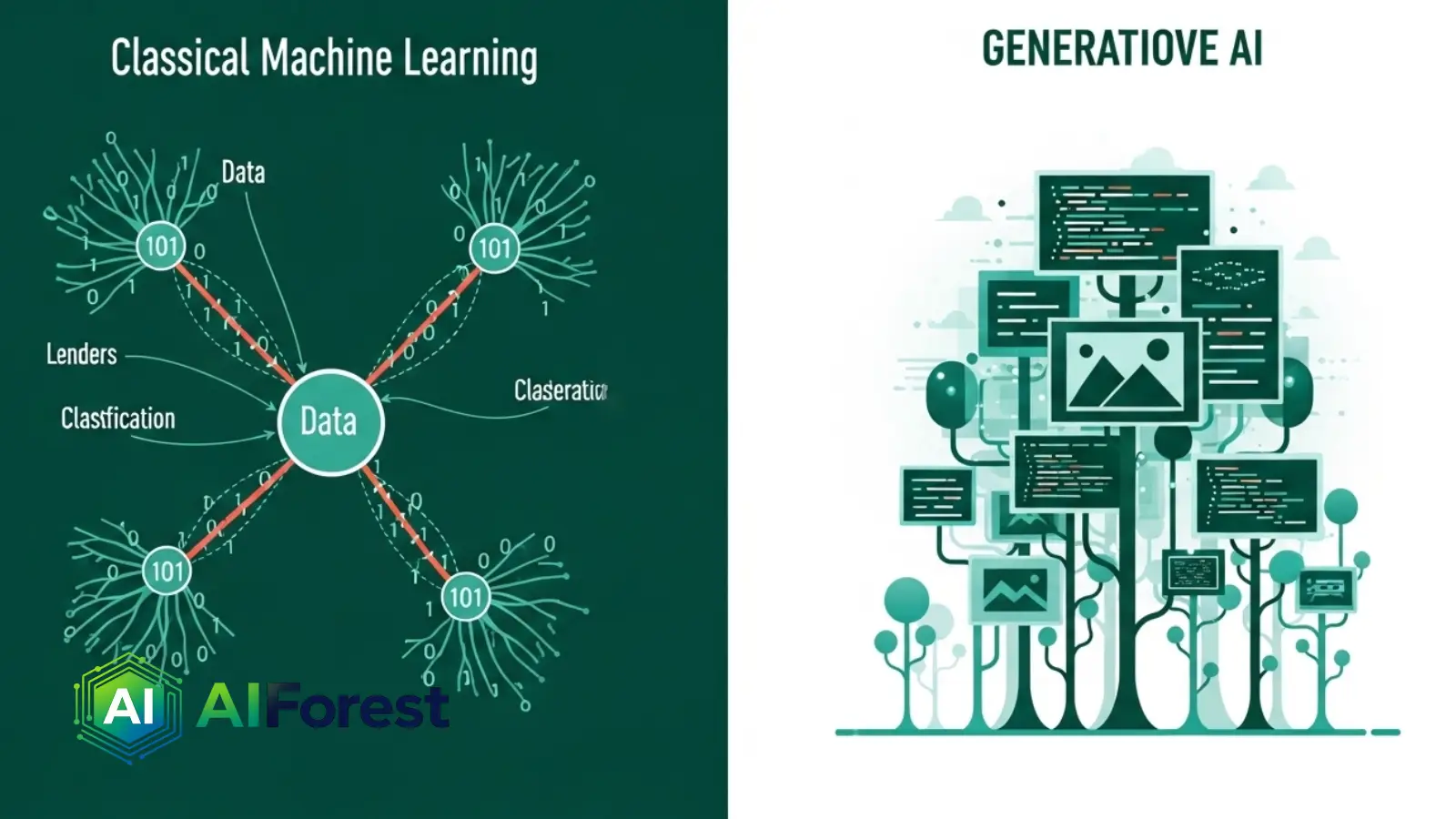

- Machine learning teaches systems to recognize patterns and make predictions from labeled or unlabeled data — it classifies, ranks, and decides.

- Generative AI is a branch of ML that goes further: it produces entirely new content — text, images, audio, code — that did not exist before.

- In 2026, both are deeply embedded in enterprise workflows, but they solve fundamentally different problems and carry distinct risks.

Why the Distinction Matters Now More Than Ever

By early 2026, over 78% of Fortune 500 companies had deployed at least one AI system in a core business function — yet internal surveys consistently show that fewer than a third of employees can accurately explain what that system actually does under the hood. That gap is no longer just an intellectual inconvenience; it shapes hiring decisions, regulatory compliance strategies, and billion-dollar product bets.

The terms generative AI and machine learning are often used interchangeably in boardrooms and headlines, but they describe different layers of the same technological stack. Confusing them leads to misaligned expectations, poor tool selection, and, increasingly, regulatory exposure.

This article draws a precise line between the two, using current tools and real-world applications as anchors.

What Machine Learning Actually Is

Machine learning (ML) is a subset of artificial intelligence in which a system learns statistical patterns from data, then applies those patterns to make predictions or decisions on new, unseen inputs — without being explicitly programmed for each case.

The classic supervised ML workflow is: collect labeled data → train a model → evaluate performance on held-out data → deploy for inference. Think spam filters, credit scoring engines, medical imaging classifiers, and product recommendation systems.

Classical ML models of this kind are typically discriminative: they draw boundaries. Given an input, they output a label, a score, or a decision. They do not create new content.
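The workflow above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production recipe: the toy dataset, the two features, and the nearest-centroid classifier are all assumptions chosen to keep the example self-contained.

```python
from statistics import mean

# Toy labeled data (hypothetical): (links_in_message, exclamation_marks) -> label
LABELED = [
    ((0, 0), "ham"), ((1, 0), "ham"), ((0, 1), "ham"), ((1, 1), "ham"),
    ((5, 4), "spam"), ((6, 5), "spam"), ((4, 6), "spam"), ((7, 3), "spam"),
]

def train(rows):
    """Learn one centroid (mean feature vector) per label."""
    by_label = {}
    for features, label in rows:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(col) for col in zip(*points))
        for label, points in by_label.items()
    }

def predict(model, features):
    """Return the label whose centroid is closest to the input."""
    def sq_dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(model, key=lambda label: sq_dist(model[label]))

def accuracy(model, rows):
    """Fraction of rows whose predicted label matches the true label."""
    hits = sum(predict(model, f) == y for f, y in rows)
    return hits / len(rows)

# 1. collect labeled data -> 2. train -> 3. evaluate -> 4. infer on new input
train_rows, test_rows = LABELED[::2], LABELED[1::2]
model = train(train_rows)
print("held-out accuracy:", accuracy(model, test_rows))
print("new message (6, 6) ->", predict(model, (6, 6)))
```

Note that the only thing the model can ever return is one of the labels it saw during training. That constrained output space is what "discriminative" means in practice.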

Core ML disciplines

- Supervised learning — trained on labeled data; outputs predictions (e.g., fraud detection)

- Unsupervised learning — finds hidden structure in unlabeled data (e.g., customer segmentation)

- Reinforcement learning — learns through reward signals; used in robotics and game-playing agents

What Generative AI Actually Is

Generative AI is a specific branch of machine learning that trains models to produce new content (text, images, audio, video, code, or synthetic data) rather than only classifying inputs or predicting outcomes. The word “generative” refers to the model’s capacity to sample from a learned distribution and produce outputs that are novel, not retrieved.

Modern generative AI is built on architectures like transformers (for language and code), diffusion models (for images and video), and variational autoencoders. These models learn the statistical shape of vast datasets, then generate new samples that fit that shape.
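To make "sample from a learned distribution" concrete, here is a deliberately tiny sketch: a character-level Markov chain, which is far simpler than a transformer or diffusion model but follows the same learn-then-sample idea. The five-word corpus and the function names are illustrative assumptions.

```python
import random
from collections import defaultdict

# Tiny hypothetical corpus; real systems train on vastly larger datasets.
CORPUS = ["forest", "forecast", "format", "fortress", "forester"]

def fit(words):
    """Count which character follows which; '^' marks start, '$' marks end."""
    counts = defaultdict(lambda: defaultdict(int))
    for word in words:
        chars = ["^"] + list(word) + ["$"]
        for prev, nxt in zip(chars, chars[1:]):
            counts[prev][nxt] += 1
    return counts

def sample(counts, rng, max_len=12):
    """Draw one character at a time from the learned next-character distribution."""
    out, prev = [], "^"
    while len(out) < max_len:
        options = counts[prev]
        nxt = rng.choices(list(options), weights=list(options.values()))[0]
        if nxt == "$":
            break
        out.append(nxt)
        prev = nxt
    return "".join(out)

model = fit(CORPUS)
rng = random.Random(0)  # fixed seed so runs are repeatable
samples = [sample(model, rng) for _ in range(5)]
print(samples)
```

Some samples may coincide with training words and others may be strings that never appeared in the corpus. Either way, each output is assembled character by character from learned statistics rather than looked up.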

In practical terms: every time you prompt Claude, Gemini, GPT-4o, Midjourney, or Sora, you are interacting with a generative AI model. The outputs are synthesized, not searched.

“Generative AI does not retrieve — it constructs. That single distinction reshapes every downstream question about accuracy, authorship, and accountability.”

Generative AI vs Machine Learning: Direct Comparison

| Dimension | Classical Machine Learning | Generative AI | Example tools or notes (2026) |
|---|---|---|---|
| Primary goal | Classify, predict, or decide | Create new content or data | Scikit-learn vs Claude Sonnet 4 |
| Output type | Label, score, probability | Text, image, audio, code | XGBoost vs Midjourney v7 |
| Training data | Labeled datasets (tabular, structured) | Massive unlabeled corpora (web, books, images) | AWS SageMaker vs Google Vertex AI |
| Model architecture | Decision trees, SVMs, gradient boosting | Transformers, diffusion models, VAEs | H2O.ai vs OpenAI API |
| Interpretability | Generally higher (SHAP values, feature importance) | Generally lower (black-box reasoning) | LIME / SHAP vs Anthropic Interpretability |
| Hallucination risk | Low: constrained output space | High: probabilistic free-form output | Key difference for regulated industries |
| Compute cost | Moderate training, low inference | Very high training, moderate inference | Cost gap narrowing in 2025–26 |
| Typical use cases | Fraud detection, churn prediction, diagnostics | Copilots, content creation, code generation | Stripe Radar vs GitHub Copilot |

How Both Technologies Are Converging

The clean theoretical boundary between ML and generative AI is already blurring in practice. Foundation models — the large pretrained systems that underpin most generative AI — are increasingly being fine-tuned for classical ML tasks like classification, anomaly detection, and forecasting.

At the same time, traditional ML pipelines are incorporating generative components for synthetic data generation — using models like GANs or diffusion systems to create training data for downstream classifiers. The two disciplines are not rivals; they are layers in the same stack.

What this means practically: a single enterprise AI deployment in 2026 might use an ML model to detect fraud, a generative model to draft the fraud alert message, and another ML model to classify whether the alert should be escalated. Architectural literacy matters more than ever.
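The three-stage deployment described above might be wired together roughly as follows. Everything here is a hypothetical sketch: the `Transaction` fields, thresholds, and scoring rules are invented for illustration, and the generative step is replaced by a string template so the example runs without calling any external model.

```python
from dataclasses import dataclass

@dataclass
class Transaction:
    account: str
    amount: float
    country: str
    home_country: str

def fraud_score(tx: Transaction) -> float:
    """Stand-in for a trained ML classifier: returns a score in [0, 1]."""
    score = 0.0
    if tx.amount > 5_000:
        score += 0.5
    if tx.country != tx.home_country:
        score += 0.4
    return min(score, 1.0)

def draft_alert(tx: Transaction, score: float) -> str:
    """Stand-in for a generative model call; a template keeps this runnable."""
    return (
        f"Possible fraud on account {tx.account}: {tx.amount:.2f} spent in "
        f"{tx.country} (risk score {score:.2f}). Please review."
    )

def should_escalate(score: float, alert: str) -> bool:
    """Stand-in for a second ML classifier that routes the alert."""
    return score >= 0.8 and "Possible fraud" in alert

def handle(tx, threshold=0.5):
    score = fraud_score(tx)                  # stage 1: ML detects
    if score < threshold:
        return None                          # nothing to report
    alert = draft_alert(tx, score)           # stage 2: generative model drafts
    return {"alert": alert, "escalate": should_escalate(score, alert)}  # stage 3

result = handle(Transaction("A-1001", 9200.0, "BR", "DE"))
print(result)
```

Keeping each stage behind its own function makes the boundaries auditable: the constrained stages (1 and 3) can be tested against fixed expectations, while the free-form drafting stage is isolated where its output can be reviewed.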

Impact on Jobs, Ethics, and Human Creativity

The labor question is more nuanced than headlines suggest

Classical ML automates decisions — it displaces roles built around repetitive judgment calls: underwriters, radiologists reviewing routine scans, tier-1 support analysts. The disruption is real but concentrated in specific function types.

Generative AI automates production — it compresses the cost of creating first drafts, initial code, design mockups, and synthetic datasets. This threatens different roles: junior copywriters, entry-level developers, stock illustrators. But it also expands what small teams can produce without scaling headcount.

The ethics divide is equally distinct

ML bias manifests in discriminatory outcomes — a hiring model that penalizes certain zip codes, a loan model that disproportionately rejects minority applicants. Generative AI bias manifests in representational distortions — who appears in AI-generated images, whose dialect is corrected, whose history is summarized with gaps.

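Both are serious. Neither is solved. The EU AI Act, whose obligations phase in over several years (rules for general-purpose AI models have applied since August 2025, and most remaining provisions are scheduled to apply from August 2026), treats high-risk AI systems and general-purpose AI models as separate regulatory categories, a distinction that roughly tracks the ML/generative divide.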

Human creativity is being redefined, not eliminated

The creatives and analysts gaining ground in 2026 are those who treat generative AI as a production accelerant and ML as an insight engine — not those who resist both, and not those who defer entirely to either. The highest-value skill is knowing when to trust the model and when to override it.

Where This Lands

Forest Architect’s Take

Machine learning and generative AI are not competing paradigms — they are different tools solving different problems, increasingly deployed together. ML excels where decisions need to be defensible, auditable, and constrained. Generative AI excels where volume, variation, and creative synthesis are the bottleneck. The organizations winning in 2026 are not those that picked one over the other — they are those that built the internal capability to distinguish between the two and deploy each precisely. That distinction starts with language. Get the words right, and the strategy tends to follow.

FAQs

Generative AI and machine learning are not the same thing: generative AI is a subset of machine learning. All generative AI systems are built on ML principles, but most machine learning systems are not generative. Classical ML classifies and predicts; generative AI creates new content. The relationship is similar to how all squares are rectangles, but not all rectangles are squares.

Which of the two to use depends entirely on the problem. If you need to predict outcomes or detect anomalies from structured data (sales forecasting, fraud detection, churn prediction), classical ML is more reliable, interpretable, and cost-effective. If you need to generate content, automate writing, or build conversational interfaces, generative AI is the appropriate tool. Most mature enterprise deployments in 2026 use both in the same pipeline.

Not to use them casually — but yes if you are deploying them in a professional or regulated context. Understanding that generative AI outputs are probabilistic, not factual, and that bias enters through training data, is foundational knowledge for anyone making decisions based on AI-generated content or building AI