The “Forest View” (TL;DR)

- Deep learning is a subset of machine learning that uses layered neural networks to learn patterns directly from raw data—no manual feature extraction required.

- It powers the most consequential AI systems of 2026: multimodal assistants, autonomous vehicles, medical diagnostics, and generative media.

- Understanding deep learning is no longer optional for professionals in tech, business, or policy—it is foundational literacy.

The Number That Changes Everything

In 2026, over 85% of all new AI deployments in Fortune 500 companies use deep learning at their core. That is not a projection—it is a benchmark from this year’s IBM Global AI Adoption Index. The question is no longer whether deep learning matters; it is whether you understand it well enough to work alongside it, compete with it, or build with it.

This guide cuts through the noise. No jargon walls. No false simplicity. Just a clear, grounded explanation of what deep learning is, how it actually functions, and why it sits at the center of every serious AI conversation in 2026.

What Is Deep Learning?

Deep learning is a method of machine learning inspired by the structure of the human brain. It uses artificial neural networks—layers of interconnected mathematical nodes—to identify patterns in data.

The word deep refers to the number of layers in the network. A shallow network might have two or three layers. A modern large language model can stack a hundred or more. Each additional layer allows the system to learn increasingly abstract representations of the input.

Unlike traditional programming, you do not write explicit rules. You feed the system data, and it learns the rules itself.

How Neural Networks Actually Work

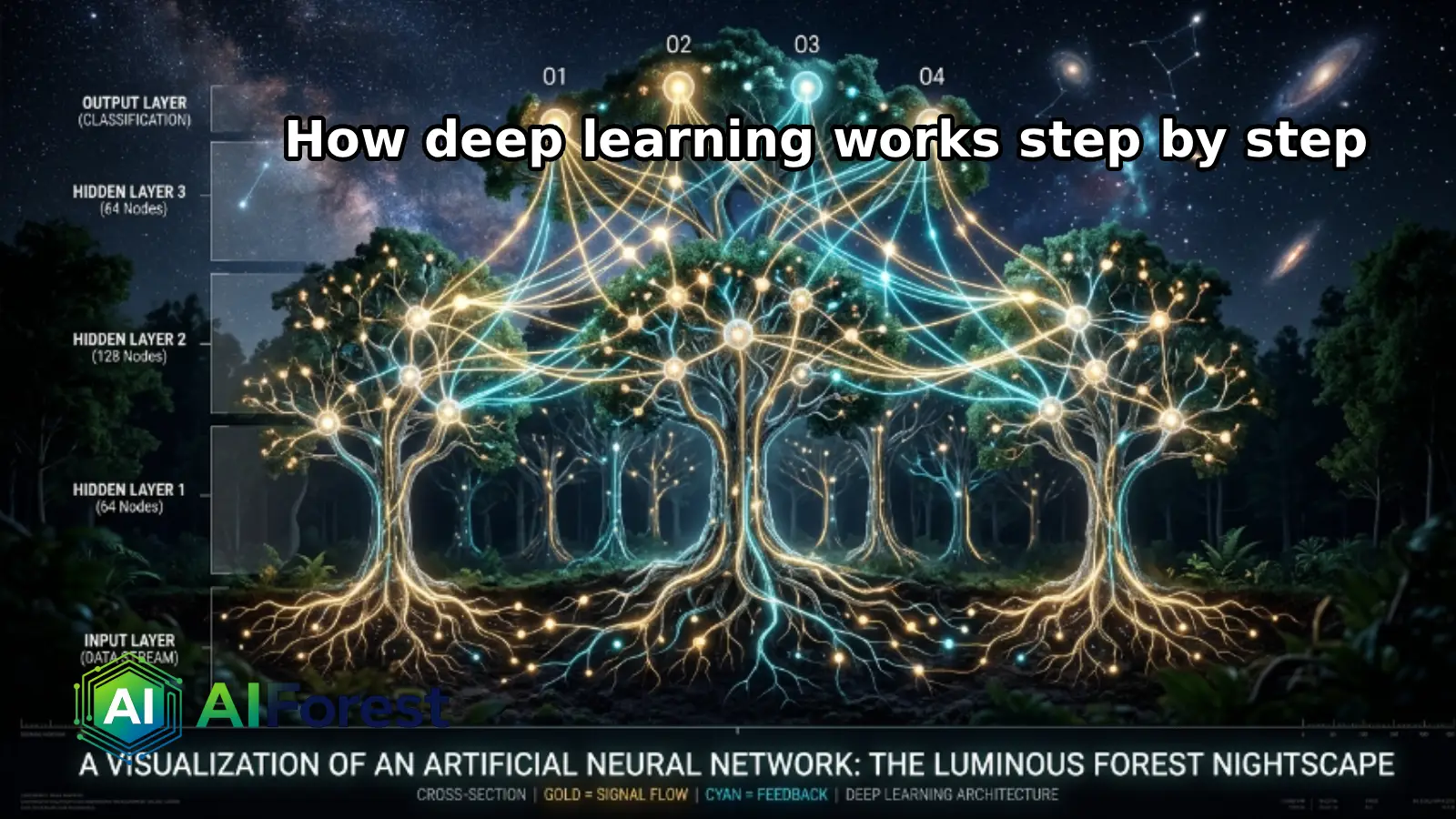

The Three-Layer Foundation

Every deep learning model starts with the same basic architecture:

- Input Layer — Receives raw data (pixels, text tokens, audio waveforms).

- Hidden Layers — Process and transform the data through weighted mathematical operations.

- Output Layer — Produces the result: a classification, a prediction, a generated token.

Each connection between nodes carries a weight—a number that determines how much influence one node has on the next. Training a neural network means adjusting these weights until the model’s outputs match the expected results.
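To make the idea of a weight concrete, here is a minimal sketch of what a single node computes. The inputs, weights, and the `bias` term are made-up illustrative values, not taken from any real model.

```python
def weighted_sum(inputs, weights, bias):
    """Scale each input by its weight, add everything up, then add the bias."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias

inputs = [1.0, 2.0, 3.0]
# Hypothetical weights: the third input has the most influence,
# and the second input pushes the result down.
weights = [0.5, -1.0, 2.0]
bias = 0.5

total = weighted_sum(inputs, weights, bias)
print(total)  # 0.5 - 2.0 + 6.0 + 0.5 = 5.0
```

Training changes the numbers in `weights` and `bias`; the arithmetic itself stays the same.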

Forward Propagation

Data flows forward through the network. Each node computes a weighted sum of its inputs and passes it through an activation function: a nonlinear formula that determines how strongly the node “fires” and passes information onward. This mimics, loosely, how biological neurons behave.

The output of one layer becomes the input of the next. By the final layer, the raw data has been transformed into a meaningful result.
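The forward pass described above can be sketched in a few lines of plain Python. The layer sizes, the hand-picked weights, and the choice of ReLU and sigmoid as activation functions are assumptions made for illustration; a trained model would learn its own weights.

```python
import math

def relu(z):
    """Pass positive values through, silence negative ones."""
    return max(0.0, z)

def sigmoid(z):
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases, activation):
    """One layer: a weighted sum per node, then the activation function."""
    return [
        activation(sum(x * w for x, w in zip(inputs, node_weights)) + b)
        for node_weights, b in zip(weights, biases)
    ]

def forward(x):
    # Hidden layer with two nodes (hypothetical weights).
    hidden = dense(x, [[1.0, -1.0], [-1.0, 1.0]], [0.0, 0.0], relu)
    # Output layer with one node; its input is the hidden layer's output.
    output = dense(hidden, [[1.0, 1.0]], [-0.5], sigmoid)
    return hidden, output

hidden, output = forward([2.0, 1.0])
print(hidden)  # [1.0, 0.0]
print(output)  # a single value between 0 and 1
```

Note how `output` is computed only from `hidden`, never from the raw input directly: each layer sees only what the previous layer hands it.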

Backpropagation and the Learning Loop

This is where learning actually happens. After each prediction, the model calculates its error (the difference between its output and the correct answer). It then works backward through the network, a process called backpropagation, to measure how much each weight contributed to that error. An optimizer then adjusts the weights to reduce it.

This cycle repeats millions or billions of times. That is training. The model does not memorize answers; it learns how to find answers.
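Here is a rough sketch of that loop on the smallest network that still has a hidden layer. Everything specific in it is an assumption chosen to keep the example short: the toy target function, the starting weights, the learning rate of 0.1, and the 200 passes over the data.

```python
import math

# Toy data from a hypothetical target function: y = 1.5 * tanh(2x).
data = [(x, 1.5 * math.tanh(2.0 * x)) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]

# Tiny network: input -> one tanh hidden node -> one linear output node.
params = {"w1": 0.5, "b1": 0.0, "w2": 0.5, "b2": 0.0}

def predict(p, x):
    h = math.tanh(p["w1"] * x + p["b1"])  # forward pass, hidden node
    return h, p["w2"] * h + p["b2"]       # forward pass, output node

def mean_squared_error(p):
    return sum((predict(p, x)[1] - y) ** 2 for x, y in data) / len(data)

def train(p, learning_rate=0.1, epochs=200):
    for _ in range(epochs):
        for x, y in data:
            h, y_hat = predict(p, x)
            error = y_hat - y  # gradient of 0.5 * (y_hat - y)**2 w.r.t. y_hat
            # Backward pass: apply the chain rule from output back to input.
            grad_w2 = error * h
            grad_b2 = error
            grad_z = error * p["w2"] * (1.0 - h * h)  # tanh'(z) = 1 - tanh(z)^2
            grad_w1 = grad_z * x
            grad_b1 = grad_z
            # Update: nudge every weight a small step against its gradient.
            p["w1"] -= learning_rate * grad_w1
            p["b1"] -= learning_rate * grad_b1
            p["w2"] -= learning_rate * grad_w2
            p["b2"] -= learning_rate * grad_b2
    return p

initial_loss = mean_squared_error(params)
train(params)
final_loss = mean_squared_error(params)
print(initial_loss, final_loss)  # the second number should be smaller
```

Real frameworks compute these gradients automatically, but the loop has the same shape: predict, measure the error, push it backward, update.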

Deep Learning vs. Machine Learning vs. AI

People use these terms interchangeably. They are not the same thing.

| Term | What It Covers | Example |
|---|---|---|
| Artificial Intelligence (AI) | Any system that mimics human cognitive tasks | A chess-playing program from 1997 |
| Machine Learning (ML) | AI systems that learn from data using algorithms | Spam filters, recommendation engines |
| Deep Learning (DL) | ML using multi-layered neural networks on large datasets | GPT-4o, DALL·E 3, AlphaFold 3 |

Deep learning is a tool within machine learning, which is a method within AI. The distinction matters because not every AI problem requires deep learning—and choosing the wrong method wastes significant resources.

The Main Deep Learning Architectures You Need to Know

Convolutional Neural Networks (CNNs)

Built for visual data. CNNs scan images in small patches, detecting edges, textures, and shapes before combining these signals into full object recognition. They power facial recognition, medical imaging, and autonomous vehicle perception.
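The patch-scanning idea can be shown with a hand-written filter. This is only a sketch: the 4x4 image and the vertical-edge kernel below are invented for illustration, whereas a real CNN learns its kernels during training. Like most deep learning libraries, it slides the kernel without flipping it.

```python
def convolve2d(image, kernel):
    """Slide the kernel over every patch of the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# 4x4 grayscale image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hypothetical kernel that responds where brightness rises from left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
for row in feature_map:
    print(row)  # each row is [0, 2, 0]: the edge sits in the middle column
```

The output is large only where the patch straddles the dark-to-bright boundary, which is the sense in which a filter "detects" an edge.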

Recurrent Neural Networks (RNNs) and LSTMs

Designed for sequential data: text, audio, time-series. These networks have a form of memory: earlier inputs influence how later inputs are processed. Long Short-Term Memory (LSTM) networks were designed to fix the “forgetting problem” that plagued early RNNs, where the influence of early inputs fades over long sequences.
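A minimal sketch of that memory, using a single hidden value carried from step to step. The weights `w_hidden` and `w_input` are illustrative assumptions, and this is a plain RNN step, not an LSTM.

```python
import math

def rnn_step(hidden, x, w_hidden=0.5, w_input=1.0):
    """Blend the previous hidden state with the current input."""
    return math.tanh(w_hidden * hidden + w_input * x)

def run(sequence):
    hidden = 0.0  # the network starts with an empty memory
    for x in sequence:
        hidden = rnn_step(hidden, x)
    return hidden

after_signal = run([1.0, 0.0, 0.0])   # a signal at step 1, then silence
after_silence = run([0.0, 0.0, 0.0])  # silence throughout
right_after = run([1.0])              # the state immediately after the signal

print(after_signal, after_silence, right_after)
```

The first sequence ends with a nonzero state even though its last two inputs are zero, so the early input is still remembered. It is also much smaller than `right_after`, which is the fading that LSTMs were built to counter.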

Transformers

Introduced in 2017 and now the dominant architecture. Transformers process entire sequences simultaneously using a mechanism called self-attention, which lets the model weigh the relevance of every word (or token) to every other word at once. Every major language model, including GPT, Claude, and Gemini, is built on this foundation.
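Self-attention is easier to follow with numbers. The sketch below is a simplified version under stated assumptions: the three two-dimensional token vectors are invented, and the queries, keys, and values are the raw tokens themselves, whereas a real transformer first passes them through learned projections.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(tokens):
    """Scaled dot-product attention with queries = keys = values = tokens."""
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    # One row of weights per token: its relevance score against every token.
    weights = [softmax([dot(q, k) / scale for k in tokens]) for q in tokens]
    # Each output is a weighted mix of all token vectors.
    outputs = [
        [sum(w * v[d] for w, v in zip(row, tokens)) for d in range(dim)]
        for row in weights
    ]
    return weights, outputs

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # hypothetical embeddings
weights, outputs = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Every row of `weights` is computed independently of the others, which is why the whole sequence can be processed at once rather than one token at a time.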

A Comparison: Three Leading Deep Learning Frameworks in 2026

| Framework | Primary Strength | Best For | 2026 Status |
|---|---|---|---|
| PyTorch | Flexibility, research-grade control | Academic research, custom architectures | Industry standard |
| TensorFlow / Keras | Production deployment, Google ecosystem | Enterprise ML pipelines | Mature, widely adopted |
| JAX | Speed, hardware acceleration (TPU/GPU) | Large-scale training, bleeding-edge research | Rapid growth |

PyTorch remains the preferred choice for researchers. TensorFlow dominates enterprise pipelines with its robust serving infrastructure. JAX is gaining ground fast, particularly in organizations training frontier models at scale.

Real-World Applications That Run on Deep Learning Today

Deep learning is not theoretical. It operates inside systems you interact with every day:

- Natural Language Processing — Every major AI assistant, from Claude to ChatGPT, uses transformer-based deep learning to understand and generate text.

- Computer Vision — Radiology AI systems now detect certain cancers from scans with accuracy comparable to senior clinicians.

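- Drug Discovery — DeepMind’s AlphaFold has predicted structures for over 200 million known proteins, published in the AlphaFold Protein Structure Database, cutting years off pharmaceutical research timelines. AlphaFold 3 extends this to how proteins interact with DNA, RNA, and small molecules.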

- Autonomous Systems — Self-driving vehicles use CNNs and sensor fusion models to interpret their environment in real time.

- Generative Media — Diffusion models, another deep learning architecture, power image and video generation tools.

The Human Root: Jobs, Ethics, and What Deep Learning Cannot Do

The Jobs Question

Deep learning automates pattern recognition at industrial scale. Roles built on repetitive classification tasks—data entry, basic radiological reads, document review—are under genuine pressure. But the system creates demand too: AI trainers, model auditors, prompt engineers, and ML infrastructure specialists are among the fastest-growing roles in 2026.

The more accurate framing is not replacement but displacement and redistribution. Skills that remain resistant to automation include contextual judgment, ethical reasoning, stakeholder communication, and creative direction.

The Ethics Problem

Deep learning systems learn from data. Biased data produces biased models. This is not a theoretical risk—it has caused documented harm in hiring algorithms, criminal sentencing tools, and facial recognition systems deployed in public spaces.

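The EU AI Act entered into force in August 2024 and applies in phases: bans on prohibited practices took effect in February 2025, obligations for general-purpose AI models in August 2025, and transparency and human-oversight requirements for high-risk AI systems are phased in after that. In the US, NIST’s voluntary AI Risk Management Framework serves as a common reference point. Governance is now part of the deployment conversation, not an afterthought.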

What Deep Learning Cannot Do

It cannot explain its reasoning in human-interpretable terms without additional tooling. It cannot generalize reliably to situations it has never encountered. It does not understand; it approximates. That distinction matters enormously in high-stakes environments like law, medicine, and infrastructure.

The Verdict

Deep learning is the most consequential technical method of the past decade, not because it is perfect, but because it is capable in ways that previous approaches were not. The systems it powers are improving faster than most organizations can absorb them.

The practical opportunity in 2026 is not to master deep learning as an engineer (though that remains valuable). It is to understand it well enough to ask the right questions: What data trained this? What was it optimized for? Where does it fail? Literacy, not expertise, is the immediate need.

The organizations and individuals who engage seriously with these questions will be better positioned than those who treat deep learning as a black box to either fear or blindly trust.

FAQs

What is the difference between machine learning and deep learning?

Machine learning is the broad practice of building systems that learn from data. Deep learning is one specific method within that field: it uses neural networks with many layers to learn from large, complex datasets like images, audio, or text. Think of machine learning as the category and deep learning as one of its most powerful tools.

Does deep learning need a lot of data?

Generally, yes. Deep learning models require substantial training data to perform well because they learn patterns statistically. However, techniques like transfer learning allow developers to take a model pre-trained on a massive dataset and fine-tune it with far less data for a specific task. This has significantly lowered the data barrier for many applications.

Is a neural network the same thing as deep learning?

Not exactly. A neural network is the architecture: the structure of interconnected nodes. Deep learning is the approach: using neural networks with many layers, trained on large datasets with modern optimization techniques. All deep learning uses neural networks, but a simple two-layer neural network is not typically called deep learning.